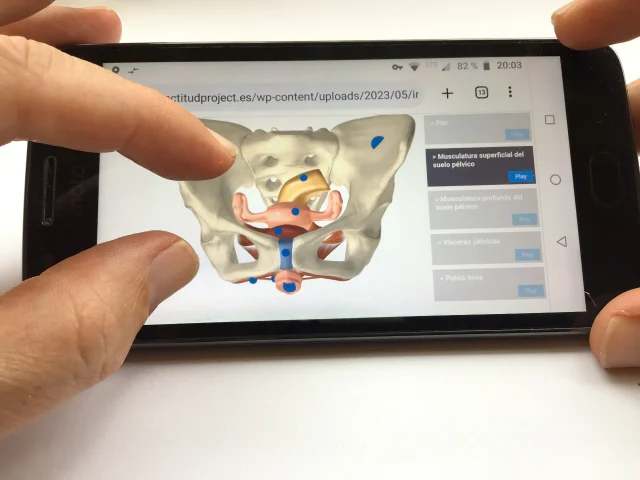

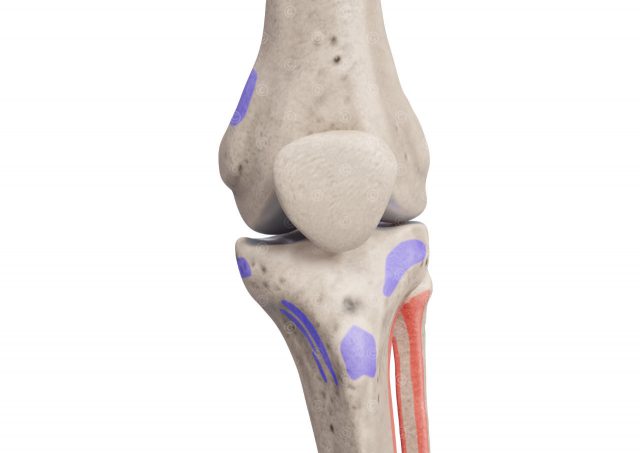

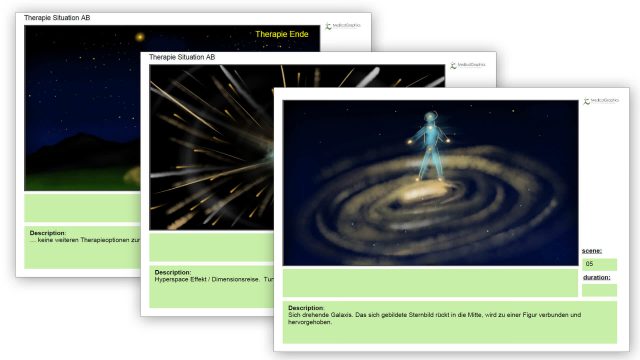

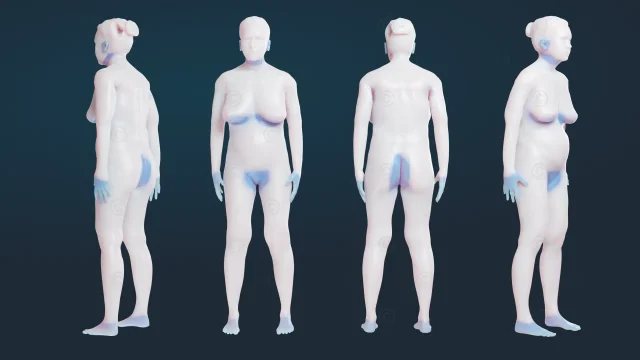

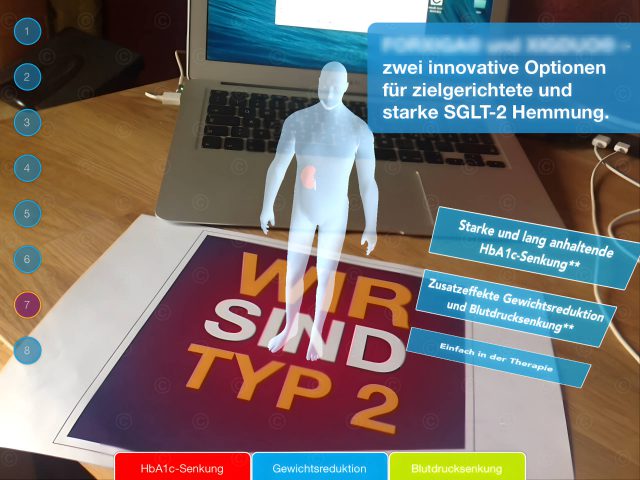

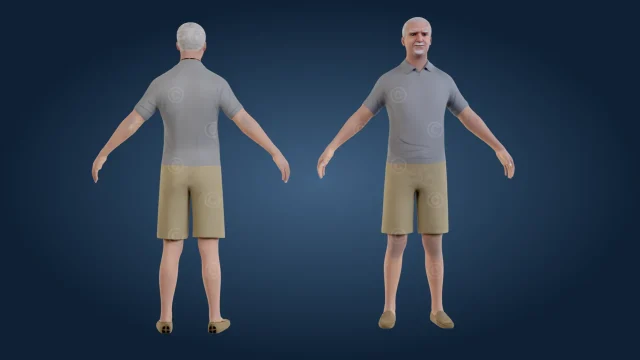

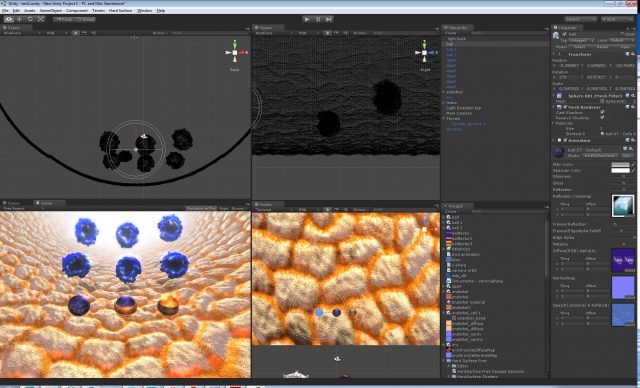

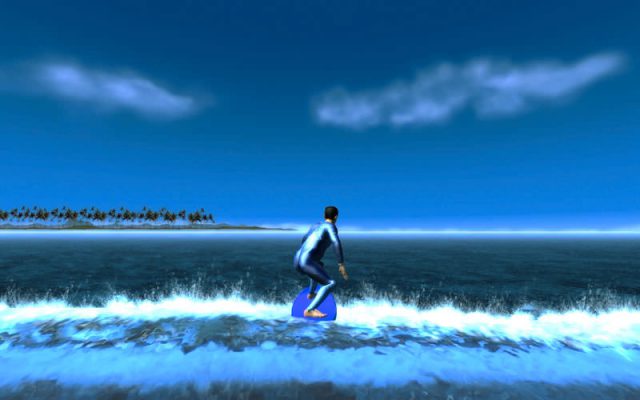

For this application, a person’s body movement and facial expressions were to be transferred to virtual 3D figures (avatars). In this system, an iPad captures the body movement (motion capture), and an iPhone mounted in front of the head captures the facial expressions (face tracking). The data was then processed with ARKit on an external Apple computer and transferred to the avatars in real time. To match the two people in the client’s key visual as closely as possible, we designed the figures from images taken at a photo shoot.

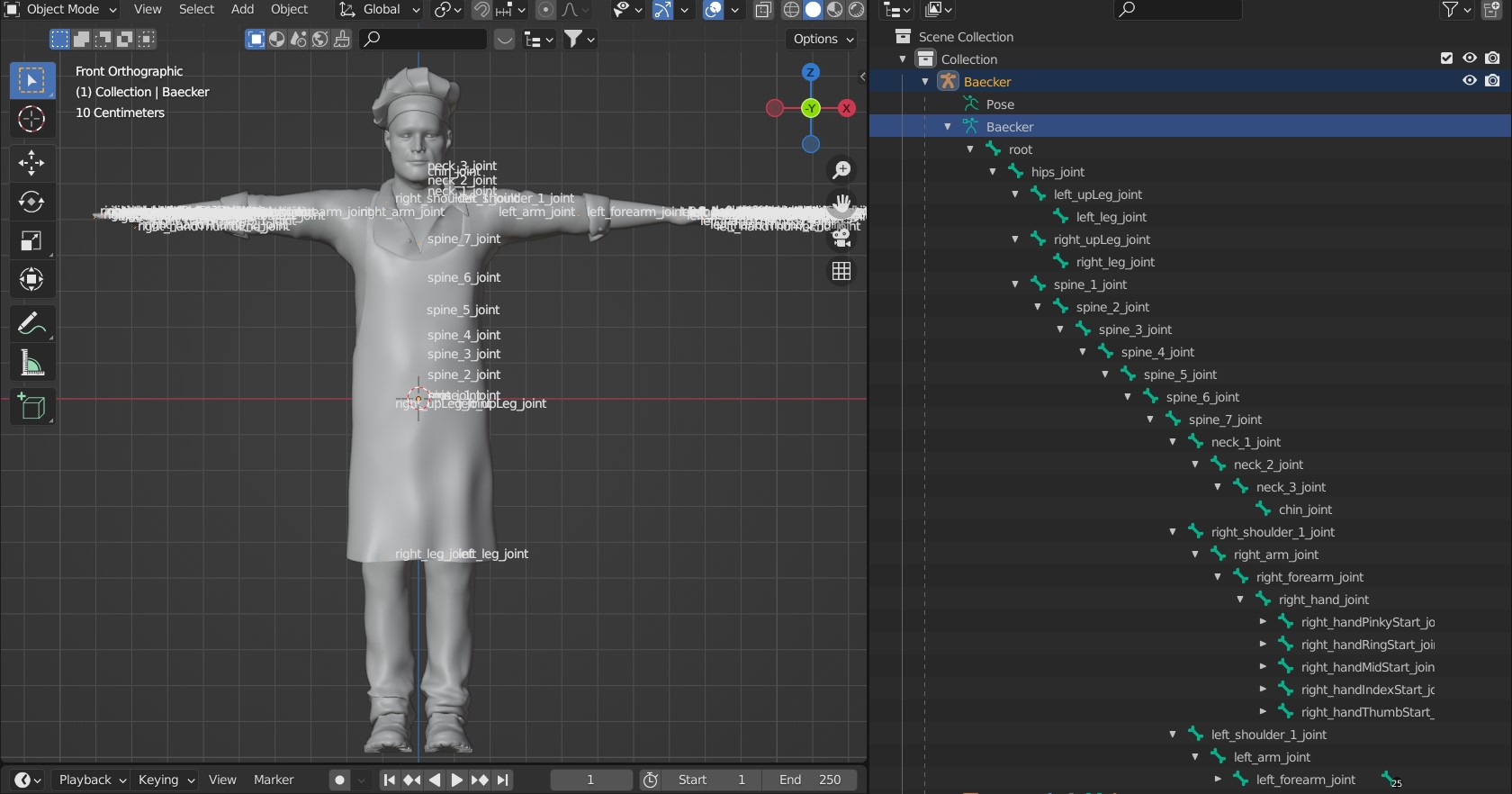

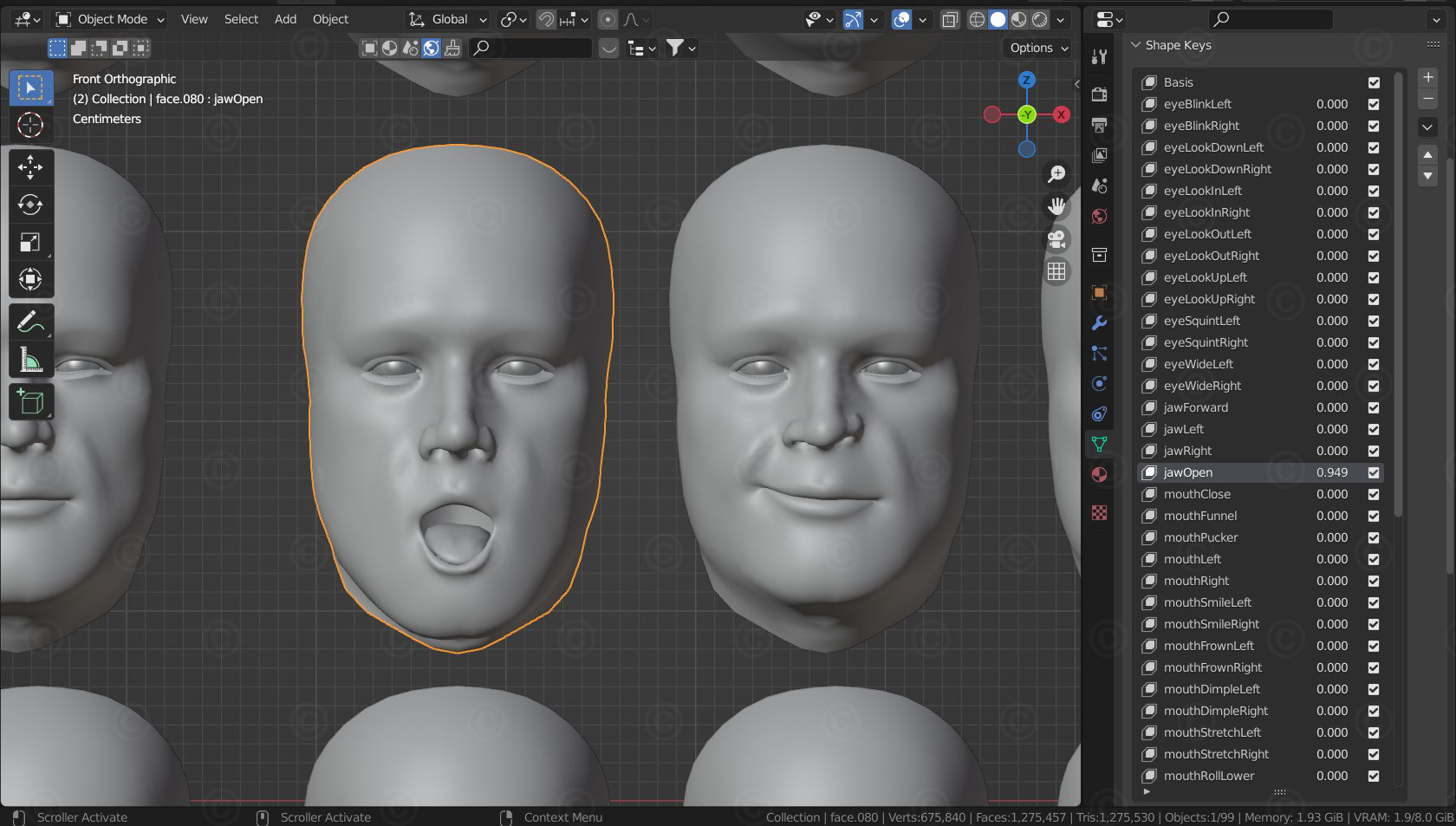

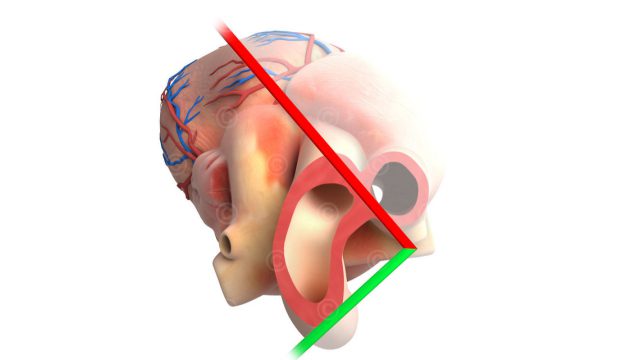

In the next step, the figure’s “skeleton”, which drives the body movement, had to be built to the specifications defined by Apple. For the face, about 50 to 60 blendshapes had to be created. These are not complete facial expressions but individual movements of parts of the face, such as left eye closed, upper lip raised, or left corner of the mouth pulled down. ARKit interpreted the video recorded by the iPhone using an AI (more precisely, a program component created by machine learning or deep learning) and recreated the facial expressions on the virtual character’s face. Because the system mixed dozens of blendshapes together even for quite simple facial expressions, the challenge was to design them so that the combined result did not look distorted or exaggerated.
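To illustrate why the mixing is delicate, here is a minimal sketch of the usual linear blendshape formula, in which each vertex is moved by the weighted sum of every active shape's offset from the neutral face. It is an illustration under assumptions, not the project's actual pipeline: the function `mix_blendshapes`, the two-vertex toy mesh, and the chosen weights are hypothetical, and the shape names are used only as labels. Weights are clamped to the range 0.0 to 1.0, the range in which ARKit reports blendshape coefficients.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def mix_blendshapes(
    neutral: List[Vec3],
    targets: Dict[str, List[Vec3]],
    weights: Dict[str, float],
) -> List[Vec3]:
    """Return neutral + sum_i w_i * (target_i - neutral) for every vertex.

    Hypothetical helper for illustration; a real engine does this on the GPU.
    """
    result = [list(v) for v in neutral]
    for name, weight in weights.items():
        target = targets[name]
        if len(target) != len(neutral):
            raise ValueError(f"blendshape {name!r} has a different vertex count")
        # Clamp to the 0.0..1.0 range used for tracking coefficients.
        w = min(max(weight, 0.0), 1.0)
        for i, (t, n) in enumerate(zip(target, neutral)):
            for axis in range(3):
                # Offsets of all active shapes simply add up per vertex.
                result[i][axis] += w * (t[axis] - n[axis])
    return [(v[0], v[1], v[2]) for v in result]


# Toy mesh with two vertices (assumed data, not taken from the project).
neutral = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
targets = {
    "jawOpen": [(0.0, -1.0, 0.0), (1.0, -1.0, 0.0)],
    "mouthSmileLeft": [(0.0, 0.0, 0.0), (1.5, 0.5, 0.0)],
}
weights = {"jawOpen": 0.5, "mouthSmileLeft": 1.0}

mixed = mix_blendshapes(neutral, targets, weights)
print(mixed)
```

Because the offsets add up, two shapes that move the same vertices can push them further than either shape alone, or partly cancel each other. This is why each blendshape has to be sculpted with its likely combinations in mind.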

Project details:

Content: 2 characters, each with a bone system (skeleton) and about 55 blendshapes for the face

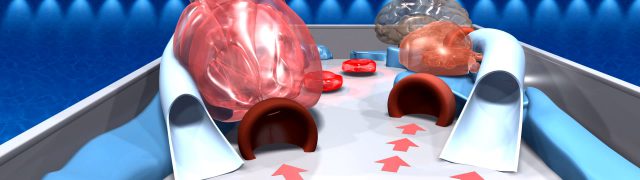

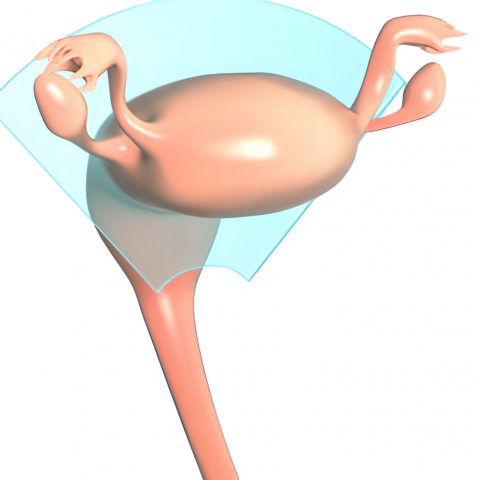

Use: App for use at congresses

Specifications: Implemented with Apple ARKit on iPhone and iPad

Client: under NDA

The client holds the usage rights to the illustrations shown here; reuse is not permitted. The images are watermarked.